A long time ago, I used to play with search engines all the time, particularly in the context of bounded search (that is, search over a particular set of web pages or web domains, e.g. Search Hubs and Custom Search at ILI2007). Although I’m not at IWMW this year, I can’t not have an IWMW related tinker, so here’s a quick play around IWMW related twittering folk…

To start with, let’s have a look at the IWMW Twitter account:

We see there are several twitter lists associated with the account, including one for participants…

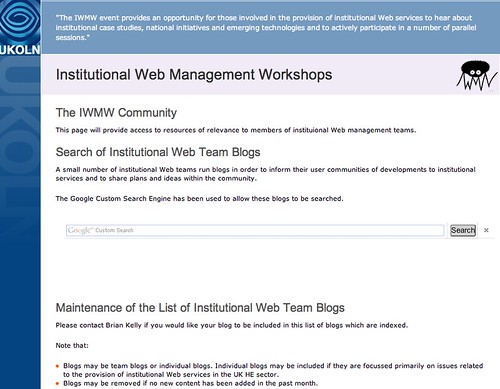

Looking around the IWMW10 website, I also spy a community area, with a Google Custom search engine that searches over institutional web management blogs that @briankelly, I presume, knows about:

It seems a bit of a pain to manage though… “Please contact Brian Kelly if you would like your blog to be included in this list of blogs which are indexed”

Ever one to take the lazy approach, I wondered whether we could create a useful search engine around the URLs disclosed on the public Twitter profile pages of folk listed on the various IWMW Twitter lists. The answer is “not necessarily”, because the URLs folk have posted on their Twitter profiles seem to point all over the place, but it’s easy enough to demonstrate the raw principle.

So here’s the recipe:

– find a Twitter list with interesting folk on it;

– use the Twitter API to grab the members of that list;

– the results include profile information of everyone on the list – including the URL they specified as a home page in their profile;

– grab the URLs and generate an annotations file that can be used to import the URLs into a Google Custom Search Engine;

– note that the annotations file should include a label identifier that specifies which CSE should draw on the annotations:
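As a rough sketch of that last step, here is one way the annotations file could be put together using Python's standard `xml.etree.ElementTree` module. The element and attribute names follow the annotations format used in this post; the function name `build_annotations`, the label `_cse_examplelabel` and the example URL patterns are all made up for illustration.

```python
import xml.etree.ElementTree as ET


def build_annotations(patterns, cse_label):
    """Return CSE annotations XML (as a string) for the given URL patterns.

    cse_label is the label identifier of the CSE that should draw on
    these annotations.
    """
    root = ET.Element("GoogleCustomizations")
    annotations = ET.SubElement(root, "Annotations")
    for pattern in patterns:
        # One <Annotation> per URL pattern; ElementTree escapes characters
        # such as & in attribute values for us.
        annotation = ET.SubElement(annotations, "Annotation", about=pattern, score="1")
        ET.SubElement(annotation, "Label", name=cse_label)
    return ET.tostring(root, encoding="unicode")


# Hypothetical label and patterns, for illustration only.
xml_text = build_annotations(["example.com/*", "example.org/page.foo"], "_cse_examplelabel")
print(xml_text)
```

Building the XML with a library rather than by string concatenation means a profile URL containing an ampersand or a quote mark should not break the file.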

Once the file is uploaded, you should have a custom search engine built around the URLs that folk on the Twitter list have revealed in their Twitter profiles (here’s my IWMW Participants CSE; list date: 12:00 12/7/10).

Note that to create sensibly searchable URLs, I used the heuristics:

– if page URL is example.com or example.com/, search on example.com/*

– by default, if page is example.com/page.foo, just search on that page.
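A minimal sketch of those two heuristics, using `urllib.parse` from the standard library, might look like the following. The function name `to_cse_pattern` is my own invention, and I'm assuming that anything with a path (including directory-style URLs such as example.com/blog/) counts as a single page, which the heuristics above don't actually spell out.

```python
from urllib.parse import urlsplit


def to_cse_pattern(url):
    """Turn a profile URL into a CSE URL pattern using the two heuristics."""
    url = url.strip()
    # urlsplit only recognises the host part if a scheme is present.
    if "://" not in url:
        url = "http://" + url
    parts = urlsplit(url)
    host, path = parts.netloc, parts.path
    if path in ("", "/"):
        # Bare domain: search everything under it.
        return host + "/*"
    # Default: treat the URL as a single page and search just that page.
    pattern = host + path
    if parts.query:
        pattern += "?" + parts.query
    return pattern


for example in ["http://example.com", "http://example.com/", "http://example.com/page.foo"]:
    print(example, "->", to_cse_pattern(example))
```

Splitting the URL properly also drops an https:// prefix as well as an http:// one.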

I used Python (badly!;-) and the tweepy library to generate my test CSE annotations feed:

    import tweepy

    # These are the keys you would normally use with OAuth
    consumer_key = ''
    consumer_secret = ''

    # These are the special keys for single user apps from http://dev.twitter.com/apps
    # as described in http://dev.twitter.com/pages/oauth_single_token
    # (select your app, then My Access Token from the sidebar)
    key = ''
    secret = ''

    auth = tweepy.OAuthHandler(consumer_key, consumer_secret)
    auth.set_access_token(key, secret)
    api = tweepy.API(auth)

    # This is the identifier of the Google CSE you want to populate
    cseLabelFromGoogle = ''

    listowner = 'iwmw'
    tag = 'iwmw10participant'

    cse = cseLabelFromGoogle

    with open(tag + 'listhomepages.xml', 'w') as f:
        f.write("<GoogleCustomizations>\n\t<Annotations>\n")
        # Use the Cursor object so we can iterate through the whole list
        for un in tweepy.Cursor(api.list_members, owner=listowner, slug=tag).items():
            if isinstance(un, tweepy.models.User):
                l = un.url
                if l:
                    l = l.replace("http://", "")
                    # Add a wildcard: any URL without a trailing slash
                    # gets "/*" appended, otherwise just "*"
                    if l.endswith('/'):
                        l = l + "*"
                    else:
                        l = l + "/*"
                    f.write("\t\t<Annotation about=\"" + l + "\" score=\"1\">\n")
                    f.write("\t\t\t<Label name=\"" + cse + "\"/>\n")
                    f.write("\t\t</Annotation>\n")
        f.write("\t</Annotations>\n</GoogleCustomizations>")

(Here’s the code as a gist, with tweaks so it runs with OAuth.)

Running this code generates a file (iwmw10participantlisthomepages.xml for the settings above) that contains Google custom search annotations for a particular Google CSE, based around the URLs declared in the public Twitter profiles of people listed in a particular list. This file can then be uploaded to the Google CSE environment and used to help configure a bounded search engine.
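Before uploading, it can be worth checking that the file is well-formed and contains the patterns you expect. Here is a small sketch of one way to do that with the standard library; the function name `list_annotation_patterns` and the sample label and URLs are made up for illustration.

```python
import xml.etree.ElementTree as ET


def list_annotation_patterns(xml_text):
    """Parse annotations XML and return a list of (pattern, label) pairs."""
    # ET.fromstring raises ET.ParseError if the XML is not well-formed.
    root = ET.fromstring(xml_text)
    pairs = []
    for annotation in root.iter("Annotation"):
        label = annotation.find("Label")
        label_name = label.get("name") if label is not None else None
        pairs.append((annotation.get("about"), label_name))
    return pairs


# Hypothetical sample in the same shape as the generated file.
sample = """<GoogleCustomizations>
  <Annotations>
    <Annotation about="example.com/*" score="1">
      <Label name="_cse_examplelabel"/>
    </Annotation>
    <Annotation about="example.org/page.foo" score="1">
      <Label name="_cse_examplelabel"/>
    </Annotation>
  </Annotations>
</GoogleCustomizations>"""

for pattern, label in list_annotation_patterns(sample):
    print(pattern, label)
```

To check a file on disk, read its contents with `open(filename).read()` and pass the result to the function.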

So what does this mean? It means that if you have identified a set of people sharing a particular set of interests using a Twitter list, it’s easy enough to generate a custom search engine around the webpages or domains they have declared in their Twitter profiles.

Hello Tony

Sorry not to see you at IWMW 2010. Hope you are flourishing in IoW and Cambridge. Brian has shorter hair this year, but otherwise things are much the same. Our theme is fretting about budget cuts. Between sessions I am reading William Cobbett’s Rural Rides which is rather great and an antidote to digital technology.

BR Amy

Hi Amy

Yes – sad not to see you at IWMW this year (didn’t get my act together in time!); we must grab a coffee somewhen:-)

Thanks for this. Very good idea. I am working on a program to extract just the usernames from the api.list_members() method. What I can’t figure out is how to do that and use the Cursor method. I am only getting the first 20 usernames.

Here is my code. The [0] extracts the username from the other data:

    the_list = api.list_members(username, tw_list)[0]
    for user in the_list:
        tweeter = user.screen_name

When I use the Cursor method with that, it tells me I can’t use pagination with list_method.

Thanks for any help.

Nice idea!

I also made a solution for using Google On The Fly with Delicious tags. You may see my solution at http://pipes.yahoo.com/topicalsearch/delicious_domains