Another of those very buried lede posts…

Over the years, I’ve spent a lot of time pondering the way the OU produces and publishes course materials. The OU is a publisher and a content factory, and many of the production modes model a factory system, not least in terms of the scale of delivery (OU course populations can run at over 1000 students per presentation, and first year undergrad equivalent modules can be presented, in the same form and largely unchanged, twice a year for five years or more).

One of the projects currently being undertaken internally is the intriguingly titled Redesigning Production project, although I still can’t quite make sense (for myself, in terms I understand!) of what the remit or the scope actually is.

Whatever. The project is doing a great job soliciting contributions through online workshops, forums, and the painfully horrible Yammer channel (it demands third party cookies are set and repeatedly prompts me to reauthenticate. With the rest of the university moving gung ho to Teams, that a future looking project is using a deprecated comms channel seems… whatever.) So I’ve been dipping my oar in, pub bore style, with what are probably overbearing and overlong (and maybe out of scope? I can’t fathom it out…) “I remember when”, “why don’t we…” and “so I hacked together this thing for myself” style contributions…

So here’s a little something inspired by a current, and ongoing, discussion about detecting broken links in live course materials: a simple link checker.

```python
# Run a link check on a single link
import requests


def link_reporter(url, display=False, redirect_log=True):
    """Attempt to resolve a URL and report on how it was resolved."""
    if display:
        print(f"Checking {url}...")

    # Make request and follow redirects
    r = requests.head(url, allow_redirects=True)

    # Optionally create a report including each step of redirection/resolution
    steps = r.history + [r] if redirect_log else [r]

    step_reports = []
    for step in steps:
        step_report = (step.ok, step.url, step.status_code, step.reason)
        step_reports.append(step_report)
        if display:
            txt_report = f'\tok={step.ok} :: {step.url} :: {step.status_code} :: {step.reason}\n'
            print(txt_report)

    return step_reports
```

That bit of Python code, which took maybe 10 minutes to put together, will take a URL and try to resolve it, keeping track of any redirects along the way as well as the status from the final page request (for example, whether the page loaded successfully with a 200 status code, or whether a 404 page not found was encountered). Other status codes are also possible.
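To make that "redirects plus final status" idea a bit more concrete, here is a minimal sketch that condenses the list of `(ok, url, status_code, reason)` tuples returned by `link_reporter()` into a one-line summary. The function name `summarise_report` and the summary keys are hypothetical, made up for illustration, and the example URLs are placeholders.

```python
def summarise_report(step_reports):
    """Condense a list of (ok, url, status_code, reason) tuples.

    The tuple layout matches what link_reporter() returns; the summary
    keys are illustrative and not part of the original code.
    """
    if not step_reports:
        return {"resolved": False, "redirects": 0, "final_url": None,
                "final_status": None, "final_reason": None}

    # The last step is the final page request; earlier steps are redirects
    ok, final_url, status, reason = step_reports[-1]
    return {
        "resolved": ok and status == 200,
        "redirects": len(step_reports) - 1,
        "final_url": final_url,
        "final_status": status,
        "final_reason": reason,
    }


# Placeholder data in the same shape as a link_reporter() result
example = [
    (True, "http://example.com/old", 301, "Moved Permanently"),
    (True, "https://example.com/new", 200, "OK"),
]
summary = summarise_report(example)
print(summary)
```

Note that if `link_reporter()` is called with `redirect_log=False`, only the final step is returned, so the redirect count here would always be zero.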

[UPDATE: I am informed that there is a VLE link checker to check module links, available from the administration block on a module’s VLE site. If there is, and I’m looking in the right place, it’s possibly not something I can see or use due to permissioning… I’d be interested to see what sort of report it produces though:-)]

[UPDATE 2: repo for an ou-xml-link-checker; I really should add a link archiver to this too… That’s still TO DO ]

The code is a hacky recipe intended to prove a concept quickly that stands a chance of working at least some of the time. It’s also the sort of thing that could probably be improved on, and evolved, over time. But it works, mostly, now, and could be used by someone who could create their own simple program to take in a set of URLs and iterate through them generating a link report for each of them.

Here’s an example of the style of report it can create, using a link that was included in the materials as a Library proxied link (http://ieeexplore.ieee.org.libezproxy.open.ac.uk/xpl/articleDetails.jsp?arnumber=4376143) that I cleaned to give a non-proxied link (note to self: I should perhaps create a flag that identifies links of that type as Library proxied links; and perhaps also flag at least one other link type, the library managed (proxied) links that are keyed by a link ID value and routed via https://www.open.ac.uk/libraryservices/resource/website:):

```
[(True,
  'http://ieeexplore.ieee.org/xpl/articleDetails.jsp?arnumber=4376143',
  301,
  'Moved Permanently'),
 (True,
  'https://ieeexplore.ieee.org/xpl/articleDetails.jsp?arnumber=4376143',
  302,
  'Moved Temporarily'),
 (True,
  'https://ieeexplore.ieee.org/document/4376143/?arnumber=4376143',
  200,
  'OK')]
```

So... link checker.
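On that note to self about flagging Library proxied links: a rough sketch of what such a flag might look like is given below. It assumes, based only on the single example URL above, that proxied links can be spotted by a `.libezproxy.open.ac.uk` hostname suffix, and that library managed links can be spotted by the `libraryservices/resource/website:` prefix. The function names `unproxy` and `link_type` are hypothetical, and other proxy URL patterns may well exist that this doesn't handle.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption: patterns inferred from the example links in this post only
PROXY_SUFFIX = ".libezproxy.open.ac.uk"
LIBRARY_RESOURCE_PREFIX = "https://www.open.ac.uk/libraryservices/resource/website:"


def unproxy(url):
    """Return (cleaned_url, was_proxied) for a possibly proxied URL."""
    parts = urlsplit(url)
    host = parts.hostname or ""
    if not host.endswith(PROXY_SUFFIX):
        return url, False
    clean_host = host[: -len(PROXY_SUFFIX)]
    # Keep any explicit port that was on the original URL
    netloc = f"{clean_host}:{parts.port}" if parts.port else clean_host
    return urlunsplit(parts._replace(netloc=netloc)), True


def link_type(url):
    """Label a link as 'library-managed', 'library-proxied' or 'direct'."""
    if url.startswith(LIBRARY_RESOURCE_PREFIX):
        return "library-managed"
    if unproxy(url)[1]:
        return "library-proxied"
    return "direct"


proxied = ("http://ieeexplore.ieee.org.libezproxy.open.ac.uk"
           "/xpl/articleDetails.jsp?arnumber=4376143")
cleaned, was_proxied = unproxy(proxied)
print(link_type(proxied), cleaned)
```

The cleaned URL could then be passed to the link checker, with the link type recorded alongside the report.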

At the moment, module teams manually check links in web materials published on the VLE over many many pages. To check a hundred links spread over a hierarchical tree of pages to depth two or three takes a lot of time and navigation.

More often than not, dead links are reported by students in module forums. Some links are perhaps never clicked on and have been broken for years, but we wouldn’t know it, or have been clicked on but gone unreported. (This raises another question: why do never-clicked links remain in the materials anyway? Reporting about link activity is yet another of those stats we could and should act on internally (course analytics, a quality issue) but we don’t: the institution prefers to try to shape students by tracking them using learning analytics, rather than improving things we have control over using course analytics. We analyse our students, not our materials, even if our students’ performance is shaped by our materials. Go figure.)

This is obviously not a “production” tool, but if you have a set of links from a set of course materials, perhaps collected together in a spreadsheet, and you had a Python code environment, and you were prepared to figure out how to paste a set of URLs into a Python script, and you could figure out a loop to iterate through them and call the link checker, you could automate the link checking process in some sort of fashion.
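For what it's worth, that loop might look something like the following minimal sketch. It assumes the spreadsheet has been exported as CSV with a column headed `url` (the column name, the function name `check_links_from_csv` and the example URLs are all made up for illustration). A stand-in checker is used so the example runs without touching the network; in practice you would pass in something like `link_reporter` instead.

```python
import csv
import io


def check_links_from_csv(csv_text, checker, url_column="url"):
    """Run checker(url) once for each distinct URL in some CSV text."""
    results = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        url = (row.get(url_column) or "").strip()
        # Skip blank cells and URLs we have already checked
        if url and url not in results:
            results[url] = checker(url)
    return results


def fake_checker(url):
    """Stand-in for a real link checker; pretends every link resolves."""
    return [(True, url, 200, "OK")]


# Placeholder spreadsheet export with one duplicated link
sheet = "url\nhttps://example.com/a\nhttps://example.com/b\nhttps://example.com/a\n"
reports = check_links_from_csv(sheet, fake_checker)
for url, report in reports.items():
    print(url, report[-1])
```

Deduplicating the URLs first means a link that appears on several pages only gets requested once.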

So: tools to make life easier can be quickly created for, and made available to (and can also be created or extended by) folk with access to certain environments that let them run automation scripts and who have the skills to use the tools provided (or the skills and time to make them for themselves).

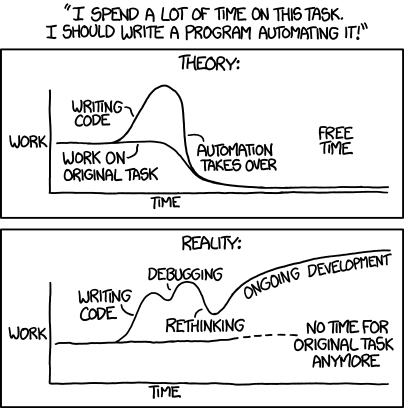

By the by, anyone who has been tempted, or actually attempted, to create their own (end user development) automation tools will know that even though you know it should only take a half hour hack to create a thing, that half an hour is elastic:

Having created that simple link checker fragment to drop into the “broken link” Redesigning Production forum thread, in part to demonstrate that a link checker that works at the protocol level can identify a range of redirects and errors (for example, ‘content not available in your region’ / HTTP 451 Unavailable For Legal Reasons is one that GDPR has resulted in when trying to access various US based news sites), I figured I really should get round to creating a link checker that will trawl through links automatically extracted from one or more OU-XML documents in a local directory. (I did have code to grab OU-XML documents from the VLE, but the OU auth process has changed since I last used that code, which means I need to move the scraper from mechanicalsoup to selenium…) You can find the current OU-XML link checker command line tool here: https://github.com/innovationOUtside/ouxml-link-checker
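As a rough illustration of the "automatically extract the links" step, here is a minimal sketch that pulls web links out of an XML document. It is not taken from the ouxml-link-checker repo: it simply assumes that links live in `href` attributes, and the element names in the sample document are placeholders rather than a faithful rendering of OU-XML.

```python
import xml.etree.ElementTree as ET


def extract_links(xml_text):
    """Return distinct http(s) URLs found in href attributes, in document order."""
    root = ET.fromstring(xml_text)
    links = []
    for el in root.iter():
        # Assumption: links are carried in an attribute called href
        href = el.get("href")
        if href and href.startswith(("http://", "https://")) and href not in links:
            links.append(href)
    return links


# Placeholder document; the element names are illustrative only
sample = """<Item><Paragraph>See <a href="https://example.com/one">one</a> and
<a href="https://example.com/two">two</a>, then
<a href="https://example.com/one">one again</a>.</Paragraph></Item>"""

print(extract_links(sample))
```

The list that comes back could then be fed, one URL at a time, to a link checker.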

So, we now have a link checker that anyone can use, right? Well, not really… It doesn’t work like that. You can use the link checker if you have Python 3 installed, and you know how to go onto the command line to install the package, and you know that copying the pip install instruction I posted in the Yammer group won’t work because the GitHub URL is shortened by an ellipsis, and you know that if you call “pip” and Python 2 has the focus on pip you’ll get an error, and when you try to run the command to run the link checker on the command line you know how to navigate to, or specify, the path (including paths with spaces…) and you know how to open a CSV file and/or open and make sense of a JSON file with the full report, and you can get copies of the OU-XML files for the materials you are interested in and get them onto a path you can call the link checker command line command with in the first place, then you have access to a link checker.

So this is why it can take months rather than minutes to make tools generally available. Plus there is the issue of scale – what happens if folk on hundreds of OU modules start running link checkers over the full set of links referenced in each of their courses on a regular basis? If (when) the code breaks parsing a document, or trying to resolve a particular URL, what does the user do then? (The hacker who created it, or anyone else with the requisite skills, could possibly fix the script quite quickly, even if just by adding in an exception handler or excluding particular source documents or URLs and remembering they hadn’t checked those automatically.)
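The "exception handler plus exclusion list" sort of quick fix might be sketched as follows. The wrapper name `safe_check`, the result keys and the example URLs are hypothetical; the point is just that a failure on one URL gets recorded rather than stopping the whole run, and that skipped URLs are logged as skipped so nobody forgets they weren't checked.

```python
def safe_check(url, checker, skip=()):
    """Call checker(url), recording errors and skips instead of crashing."""
    if url in skip:
        return {"url": url, "status": "skipped", "detail": None}
    try:
        return {"url": url, "status": "checked", "detail": checker(url)}
    except Exception as err:  # deliberately broad: this is a quick-fix wrapper
        return {"url": url, "status": "error",
                "detail": f"{type(err).__name__}: {err}"}


def flaky_checker(url):
    """Stand-in checker that fails on one particular URL."""
    if "broken" in url:
        raise ValueError("could not resolve")
    return [(True, url, 200, "OK")]


urls = ["https://example.com/ok", "https://example.com/broken",
        "https://example.com/ignore"]
results = [safe_check(u, flaky_checker, skip={"https://example.com/ignore"})
           for u in urls]
for result in results:
    print(result["status"], result["url"])
```

Catching every exception like this hides the cause of a failure behind a one-line message, which is fine for a stopgap but is exactly the kind of thing a more robust tool would need to handle properly.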

But it does also raise the issue that quick fixes that will save chunks of time that some, maybe even many, eventually, could make use of right now aren’t generally available. So every time a module presents, some poor soul on each module has to manually check, one at a time, potentially hundreds of links in web materials published on the VLE spread over many many pages published in a hierarchical tree to depth two or three.

PS As I looked at the link checker today, deciding whether I should post about it, I figured it might also be useful to add in a couple of extra features, specifically a screenshot grabber to grab a snapshot image of the final page retrieved from each link, and a tool to submit the URL to a web archiving service such as the Internet Archive or the UK Web Archive, or create a proxy link to an automatically archived version of it using something like the Mementoweb Robust Links service. So that’s the tinkering for my next two coffee breaks sorted… And again, I’ll make them generally available in a way that probably isn’t…

And maybe I should also look at more generally adding in a typo and repeated word checker, eg as per More Typo Checking for Jupyter Notebooks — Repeated Words and Grammar Checking?
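A repeated word check, at least, is the sort of thing a single regular expression can make a first stab at. The sketch below is a generic pattern, not the approach from the post referenced above, and the function name `repeated_words` is made up for illustration.

```python
import re

# A word, some whitespace, then the same word again (case-insensitive)
REPEATED_WORD = re.compile(r"\b(\w+)\s+\1\b", re.IGNORECASE)


def repeated_words(text):
    """Return (word, character_offset) pairs for immediately repeated words."""
    return [(m.group(1), m.start()) for m in REPEATED_WORD.finditer(text)]


hits = repeated_words("spread over many many pages and the the end")
print(hits)
```

As the "many many pages" hit suggests, some repeats are deliberate, so the output is better treated as a list of things to eyeball than as a list of errors.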

PPS the quality question of never-clicked links also raises a question that for me would be in scope as a Redesigning Production question and relates to the issue of continual improvement of course material, contrasted with maintenance (fixing broken links or typos that are identified, for example) and update (more significant changes to course materials that may happen after several years to give the course a few more years of life).

Our TM351 Data Management and Analysis module has been a rare beast in that we have essentially been engaged in a rolling rewrite of it ever since we first presented it. Each year (it presents once a year), we update the software and review the practical activities distributed via Jupyter notebooks, which take up about 40% of the module study time. (Revising the VLE materials is much harder because there is a long, slow production process associated with making those updates. Updating notebooks is handled purely within the module team and without reference to external processes that require scheduling and formal scheduled handovers.)

To my mind, the production process for some modules at least should be capable of supporting continual improvement, and should move away from the “fixed for three years then significant update” model.