In anticipation of a new R library from School of Data data diva @mihi_tr that will wrap the OpenSpending API and providing access to OpenSpending.org data directly from within R, I thought I’d start doodling around some ideas raised in Identifying Pieces in the Spending Data Jigsaw. In particular, common payment values, repayments/refunds and “balanced payments”, that is, multiple payments where the absolute value of a negative payment matches that of an above zero payment (so far example, -£269.72 and £269.72 would be balanced payments).

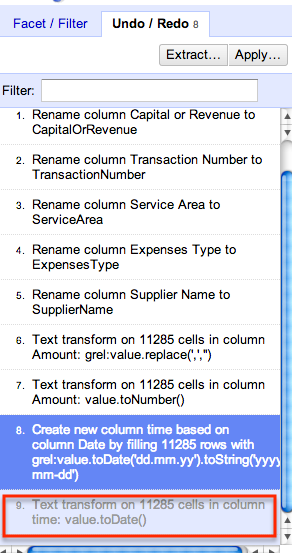

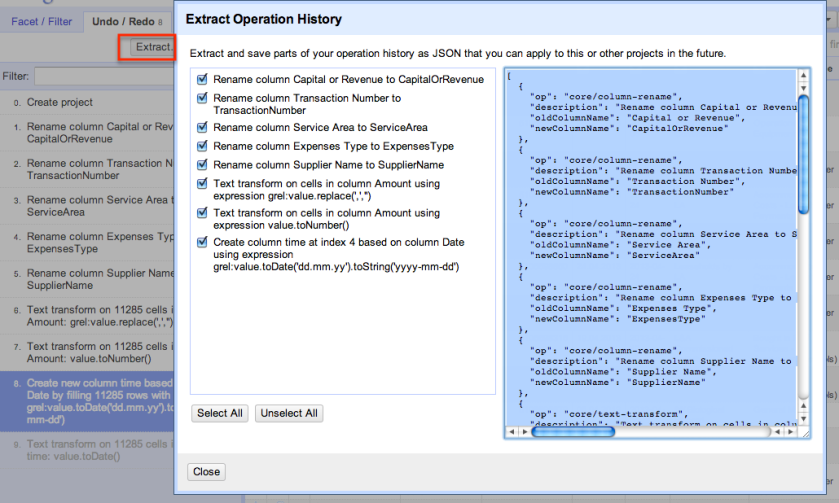

The data I’ve grabbed is Isle of Wight Council transparency (spending) data for the financial year 2012/2013. The data was pulled from the Isle of Wight Council website and cleaned using OpenRefine broadly according to the recipe described in Using OpenRefine to Clean Multiple Documents in the Same Way with a couple of additions: a new SupplierNameClustered column, originally created from the SupplierName column, but then cleaned to remove initials, (eg [SD]), direct debit prefixes (DD- etc) and then clustered using a variety of clustering algorithms; and a new unique index column, created with values '_'+row.index. You can find a copy of the data here.

To start with, let’s see how we can identify “popular” transaction amounts:

#We going to use some rearrangement routines...

library(plyr)

#I'm loading in the data from a locally downloaded copy

iw <- read.csv("~/code/Rcode/eduDemos1/caseStudies/IWspending/IWccl2012_13_TransparencyTest4.csv")

#Display most common payment values

commonAmounts=function(df,bcol='Amount',num=5){

head(arrange(data.frame(table(df[[bcol]])),-Freq),num)

}

ca=commonAmounts(iw)

ca

# Var1 Freq

#1 1567.72 2177

#2 1930.32 1780

#3 1622.6 1347

#4 1998.08 1253

#5 1642.2 1227

This shows that the most common payment by value is for £1567.72. An obvious question to ask here is: does this correspond to some sort of “standard payment” or tariff? And if so, can we reconcile this against the extent of the delivery of a particular level of service, perhaps as disclosed elsewhere?

We can also generate a summary report to show what transactions correspond to this amount, or the most popular amounts.

#We can then get the rows corresponding to these common payments

commonPayments=function(df,bcol,commonAmounts){

df[abs(df[[bcol]]) %in% commonAmounts,]

}

cp.df=commonPayments(iw,"Amount",ca$Var1)

More usefully, we might generate “pivot table” style summaries of how the popular payments breakdown with respect to expenses area, supplier, or some other combination of factors. So for example, we can learn that there were 1261 payments of £1567.72 booked to the EF Residential Care services area, and SOMERSET CARE LTD [SB] received 231 payments of £1567.72. (Reporting by the clustered supplier name is often more useful…)

#R does pivot tables, sort of...

#We can also run summary statistics over those common payment rows.

zz1=aggregate(index ~ ServiceArea + Amount, data =cp.df, FUN="length")

head(arrange(zz1,-index))

# ServiceArea Amount index

#1 EF Residential Care 1567.72 1261

#2 EMI Residential Care 1642.20 659

#3 EMI Residential Care 1930.32 645

#4 EF Residential Care 1930.32 561

#5 EF Residential Care 1622.60 530

#6 Elderly Frail Residential Income 1622.60 36

#Another way of achieving a similar result is using the plyr count() function:

head(arrange(count(cp.df, c('ServiceArea','ExpensesType','Amount')),-freq))

#That is:

zz1.1=count(cp.df, c('ServiceArea','ExpensesType','Amount'))

zz2=aggregate(index ~ ServiceArea +ExpensesType+ Amount, data =cp.df, FUN="length")

head(arrange(zz2,-index))

# ServiceArea ExpensesType Amount

#1 EF Residential Care Chgs from Ind Provs 1567.72

#2 EMI Residential Care Chgs from Ind Provs 1930.32

#3 EMI Residential Care Chgs from Ind Provs 1642.20

#4 EF Residential Care Charges from Independent Providers 1622.60

#...

zz3=aggregate(index ~ SupplierName+ Amount, data =cp.df, FUN="length")

head(arrange(zz3,-index))

# SupplierName Amount index

#1 REDACTED PERSONAL DATA 1567.72 274

#2 SOMERSET CARE LTD [SB] 1567.72 231

#3 ISLAND HEALTHCARE LTD [SB] 1930.32 214

#...

zz4=aggregate(index ~ SupplierNameClustered+ Amount, data =cp.df, FUN="length")

head(arrange(zz4,-index))

If I was a suspicious type, I suppose I might look for suppliers who received one of these popular amounts only once…

Let’s have a search for items declared as overpayments or refunds, perhaps as preliminary work in an investigation about why overpayments are being made…:

#Let's look for overpayments

overpayments=function(df,fcol,bcol='Amount',num=5){

df=subset(df, grepl('((overpay)|(refund))', df[[fcol]],ignore.case=T))

df[ order(df[,bcol]), ]

}

rf=overpayments(iw,'ExpensesType')

#View the largest "refund" transactions

head(subset(arrange(rf,Amount),select=c('Date','ServiceArea','ExpensesType','Amount')))

# Date ServiceArea ExpensesType Amount

#1 2013-03-28 Elderly Mentally Ill Residential Care Provider Refunds -25094.16

#2 2012-07-18 MH Residential Care Refund of Overpaymts -24599.12

#3 2013-03-25 Elderly Mentally Ill Residential Care Provider Refunds -23163.84

#We can also generate a variety of other reports on the "refund" transactions

head(arrange(aggregate(index ~ SupplierName+ ExpensesType+Amount, data =rf, FUN="length"),-index))

# SupplierName ExpensesType Amount index

#1 THE ORCHARD HOUSE CARE HOME Provider Refunds -434.84 8

#2 THE ORCHARD HOUSE CARE HOME Provider Refunds -729.91 3

#3 SCIO HEALTHCARE LTD Provider Refunds -434.84 3

#...

##Which is to say: The Orchard House Care home made 8 refunds of £434.84 and 3 of £729.91

head(arrange(aggregate(index ~ SupplierName+ ExpensesType, data =rf, FUN="length"),-index))

# SupplierName ExpensesType index

#1 SCIO HEALTHCARE LTD Provider Refunds 31

#2 SOMERSET CARE LTD Provider Refunds 31

#3 THE ORCHARD HOUSE CARE HOME Provider Refunds 22

#...

Another area of investigation might be “balanced” payments. Here’s one approach for finding those, based around first identifying negative payments. Let’s also refine the approach in this instance for looking for balanced payments involving the supplier involved in the largest number of negative payments.

##Find which suppliers were involved in most number of negative payments

#Identify negative payments

nn=subset(iw,Amount<=0)

#Count the number of each unique supplier

nnd=data.frame(table(nn$SupplierNameClustered))

#Display the suppliers with the largest count of negative payments

head(arrange(nnd,-Freq))

# Var1 Freq

#1 REDACTED PERSONAL DATA 2773

#2 SOUTHERN ELECTRIC 313

#3 BRITISH GAS BUSINESS 102

#4 SOMERSET CARE LTD 75

#...

Let’s look for balanced payments around SOUTHERN ELECTRIC…

#Specific supplier search

#Limit transactions to just transactions involving this supplier

se=subset(iw, SupplierNameClustered=='SOUTHERN ELECTRIC')

##We can also run partial matching searches...

#sw=subset(iw,grepl('SOUTHERN', iw$SupplierNameClustered))

#Now let's search for balanced payments

balanced.items=function(df,bcol){

#Find the positive amounts

positems=df[ df[[bcol]]>0, ]

#Find the negative amounts

negitems=df[ df[[bcol]]<=0, ]

#Find the absolute unique negative amounts

uniqabsnegitems=-unique(negitems[[bcol]])

#Find the unique positive amounts

uniqpositems=unique(positems[[bcol]])

#Find matching positive and negative amounts

balitems=intersect(uniqabsnegitems,uniqpositems)

#Subset the data based on balanced positive and negative amounts

#bals=subset(se,abs(Amount) %in% balitems)

bals=df[abs(df[[bcol]]) %in% balitems,]

#Group the data by sorting, largest absolute amounts first

bals[ order(-abs(bals[,bcol])), ]

}

dse=balanced.items(se,'Amount')

head(subset(dse,select=c('Directorate','Date','ServiceArea','ExpensesType','Amount')))

# Directorate Date ServiceArea ExpensesType Amount

#20423 Economy & Environment 2012-07-11 Sandown Depot (East Electricity -2770.35

#20424 Economy & Environment 2012-07-11 Sandown Depot (East Electricity 2770.35

#52004 Chief Executive, Schools & Learning 2013-01-30 Haylands - Playstreet Lane Electricity 2511.20

#52008 Chief Executive, Schools & Learning 2013-01-31 Haylands - Playstreet Lane Electricity -2511.20

We can also use graphical techniques to try to spot balanced payments.

#Crude example plot to highlight matched +/1 amounts

se1a=subset(se,ServiceArea=='Ryde Town Hall' & Amount>0)

se1b=subset(se,ServiceArea=='Ryde Town Hall' & Amount<=0)

require(ggplot2)

g=ggplot() + geom_point(data=se1a, aes(x=Date,y=Amount),pch=1,size=1)

g=g+geom_point(data=se1b, aes(x=Date,y=-Amount),size=3,col='red',pch=2)

g=g+ggtitle('Ryde Town Hall - Energy Payments to Southern Electric (red=-Amount)')+xlab(NULL)+ylab('Amount (£)')

g=g+theme(axis.text.x = element_text(angle = 45, hjust = 1))

g

In this first image, we see payments over time – the red markers are “minus” amounts on the negative payments. Notice that in some cases balanced payments seem to appear on the same day. If the y-value of a red and a black marker are the same, they are balanced in value. The x-axis is time. Where there is a period of equally spaced marks over x with the same y-value, this may represent a regular scheduled payment.

g=ggplot(se1a)+geom_point( aes(x=Date,y=Amount),pch=1,size=1)

g=g+geom_point(data=se1b, aes(x=Date,y=-Amount),size=3,col='red',pch=2)

g=g+facet_wrap(~ExpensesType)

g=g+ggtitle('Ryde Town Hall - Energy Payments to Southern Electric (red=-Amount)')

g=g+xlab(NULL)+ylab('Amount (£)')

g=g+theme(axis.text.x = element_text(angle = 45, hjust = 1))

g

We can also facet out the payments by expense type.

Graphical techniques can often help us spot patterns in the data that might be hard to spot just by looking at rows and columns worth of data. In many cases, it is worthwhile developing visual analysis skills to try out quick analyses “by eye” before trying to implement them as more comprehensive analysis scripts.