How can we get a quick snapshot of who’s talking to whom on Twitter in the context of a particular hashtag?

Here’s a quick recipe that shows how…

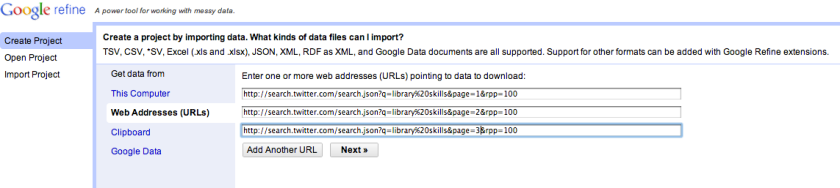

First we need to grab some search data. The Twitter API documentation provides us with some clues about how to construct a web address/URL that will grab results back from a particular search on Twitter in a machine readable way (that is, as data):

- http://search.twitter.com/search.format is the base URL, and the format we require is json, which gives us http://search.twitter.com/search.json

- the query we want is presented using the q= parameter: http://search.twitter.com/search.json?q=searchterm

- if we want multiple search terms (for example, library skills), they need encoding in a particular way. The easiest way is just to construct your URL, enter it into the location/URL bar of your browser and hit enter, or use a service such as this string encoder. The browser should encode the URL for you. (If the only punctuation in your search phrase is spaces, you can encode them yourself: just change each space to %20, to give something like library%20skills. If you want to encode the # in a hashtag, use %23.)

- we want to get back as many results as are allowed at any one time (which happens to be 100), so set rpp=100, that is: http://search.twitter.com/search.json?q=library%20skills&rpp=100

- results are paged (in the sense of different pages of Google search results, for example), which means we can ask for the first 100 results, the second 100 results and so on, as far back as the most recent 1500 tweets (page 15 for rpp=100, or page 30 if we were using rpp=50, since 15*100 = 30*50 = 1500): http://search.twitter.com/search.json?q=library%20skills&rpp=100&page=1
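If you'd rather not build the list of page URLs by hand, here's a rough Python sketch of the rules above. The function name `build_search_urls` and its `max_results` parameter are my own inventions for illustration; the base URL and the `q`, `rpp` and `page` parameters are the ones described in the list. It only constructs the URLs, it doesn't fetch anything.

```python
from urllib.parse import quote, urlencode

# Base URL for JSON search results, as described above
BASE_URL = "http://search.twitter.com/search.json"


def build_search_urls(query, rpp=100, max_results=1500):
    """Return one search URL per results page (hypothetical helper).

    max_results reflects the 1500 tweet limit mentioned above, so
    rpp=100 gives 15 pages and rpp=50 gives 30 pages.
    """
    pages = max_results // rpp
    urls = []
    for page in range(1, pages + 1):
        params = {"q": query, "rpp": rpp, "page": page}
        # quote_via=quote encodes spaces as %20 (the default would use '+')
        # and encodes '#' as %23
        urls.append(BASE_URL + "?" + urlencode(params, quote_via=quote))
    return urls


urls = build_search_urls("library skills")
print(urls[0])
print(len(urls), "page URLs")
```

The resulting list can be pasted, one URL per line, wherever you need the page addresses.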

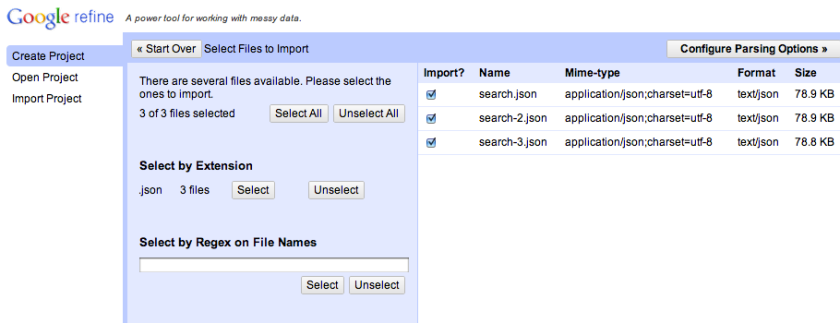

Clicking on Next provides us with a dialogue that will allow us to load the data from the URLs into Google Refine:

Clicking “Configure Parsing Options” loads the data and provides us with a preview of it:

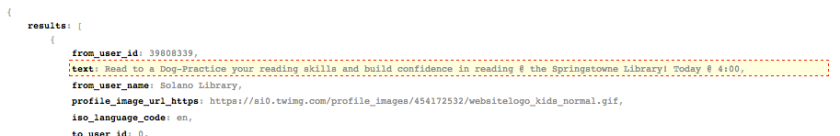

If you inspect the data that is returned, you should see it has a repeating pattern. Hovering over the various elements allows you to identify what repeating part of the result we want to import. For example, we could just import each tweet:

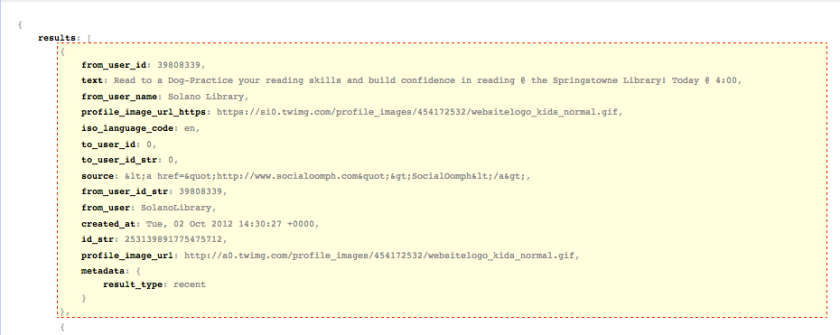

Or we could import all the data fields – let’s grab them all:

If you click the highlighted text, or click “Update Preview View”, you can get a preview of how the data will appear. To return to the selection view, click “Pick Record Nodes”:

“Create Project” actually generates the project and pulls all the data in… The column names are a little messy, but we can tidy those:

Look for the from_user and to_user columns and rename them source and target respectively… (hovering over a column name pops up a tooltip that shows the full column name):

For the example I’m going to describe, we don’t actually need to rename the columns, but it’s handy to know how to do it;-)

We can now filter out all the rows with a “null” value in the target column. It seems a bit fiddly at first, but you soon get used to the procedure… Select the text facet to pop up a window that shows the unique elements in the target column and how often they occur. Sort the list by count, and click on the “null” element – it should be highlighted and its setting should appear as “exclude”. The column will now show only the rows that have the null value:

Click on the “Invert” option and the column will now filter out all the “null” elements and only show the elements that have a non-null value – that is, tweets that have a “to_user” value (which is to say, those tweets were sent to a particular user). Here’s what we get:
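For comparison, the same "keep only the tweets that were sent to someone" filter can be sketched in a few lines of Python. This is just an illustration, not what Refine does internally: I'm assuming the search response wraps the tweets in a `results` list, and the sample tweets (alice, bob, carol) are made up. Only the `from_user` and `to_user` field names come from the data described above.

```python
import json

# Made-up sample shaped like a search response. The "results" key and
# all the values here are assumptions for illustration only.
sample = """{"results": [
  {"from_user": "alice", "to_user": "bob", "text": "@bob hello"},
  {"from_user": "carol", "to_user": null, "text": "not sent to anyone"},
  {"from_user": "bob", "to_user": "alice", "text": "@alice hi"}
]}"""


def directed_pairs(response_text):
    """Return (source, target) pairs for tweets with a non-null to_user."""
    data = json.loads(response_text)
    pairs = []
    for tweet in data.get("results", []):
        target = tweet.get("to_user")
        # JSON null becomes None in Python; skip tweets with no recipient
        if target is not None:
            pairs.append((tweet["from_user"], target))
    return pairs


pairs = directed_pairs(sample)
print(pairs)
```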

Let’s now export the source and target data so we can get it into Gephi:

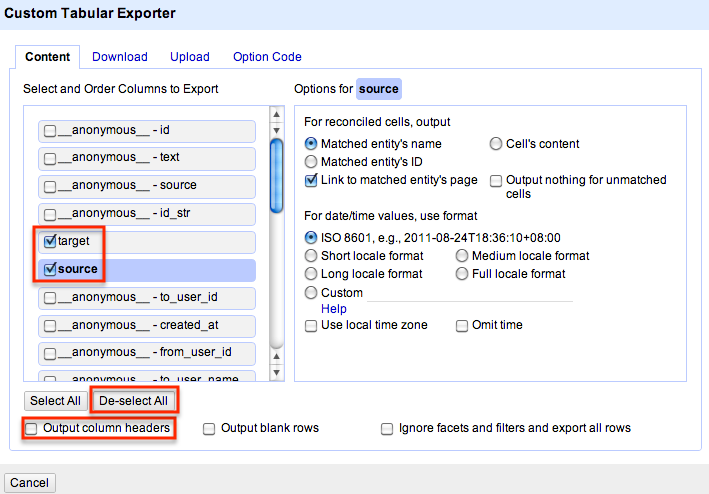

Deselect all the columns, and then select source and target columns; also deselect the ‘output column headers’ – we don’t need headers where this file is going…

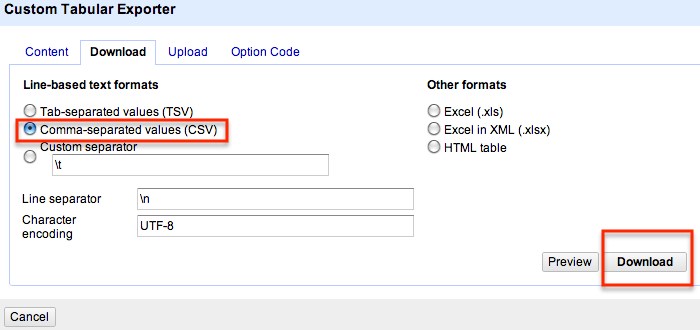

Export the custom layout as CSV data:

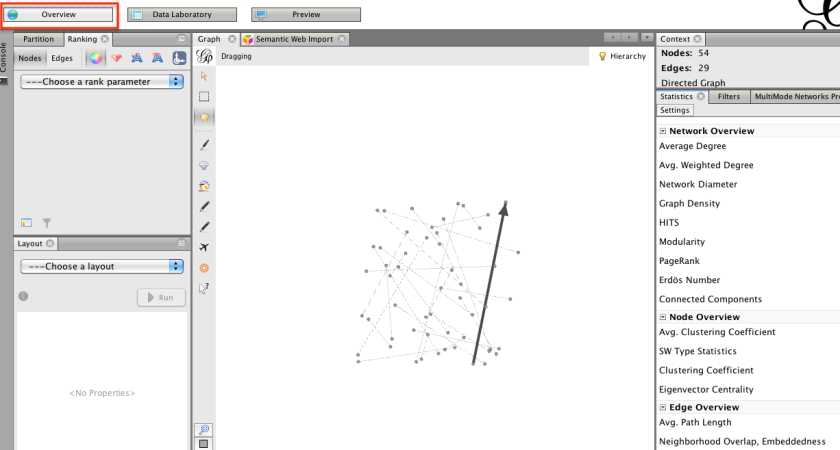
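The exported file is nothing more exotic than two comma separated names per line, with no header row. As a rough sketch of that format, here's how you might write the same thing from Python using the standard `csv` module. The helper name `edges_to_csv` and the example user names are hypothetical.

```python
import csv
import io


def edges_to_csv(edges):
    """Write (source, target) pairs as headerless two column CSV text."""
    buffer = io.StringIO()
    # No header row is written, matching the export settings above
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerows(edges)
    return buffer.getvalue()


# Hypothetical source/target pairs for illustration
edges = [("alice", "bob"), ("bob", "alice")]
csv_text = edges_to_csv(edges)
print(csv_text)
```

To save the result to disk rather than print it, write `csv_text` to a file with a .csv extension.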

We can now import this data into another application – Gephi. Gephi is a cross platform package for visualising networks. In the simplest case, it can import two column data files where each row represents two things that are connected to each other. In our case, we have connections between “source” and “target” Twitter names – that is, connections that show when one Twitter user in our search sample has sent a message to another.

Launch Gephi and from the file menu, open the file you exported from Google Refine:

We’ve now got our data into Gephi, where we can start to visualise it…

…but that is a post for another day… (or if you’re impatient, you can find some examples of how to drive Gephi here).

The column names will be a little more manageable in Refine 2.6 (already in current SVN). Instead of __anonymous__ or whatever they are, you’ll just have a single _ for anonymous leaves in the tree (e.g. JSON arrays). I think we also elided the anonymous root node, so there’ll be one less level.

Thanks! I didn’t realize I could pull multiple JSON pages right into Refine in one step. This saves me a bunch of time. Looking forward to your Gephi post.

Great resource, but what if you want to map retweets as well as normal interactions?