I know, I know, the local elections are old news now, but elections come round again and again, which means building up a set of case examples of what we might be able to do – data wise – around elections in the future could be handy…

So here’s one example of a data-related question we might ask (where in this case by data I mean “information available in: a) electronic form, that b) can be represented in a structured way”): are the candidates standing in different seats local to that ward/electoral division? By “local”, I mean – can they vote in that ward by virtue of having a home address that lies within that ward?

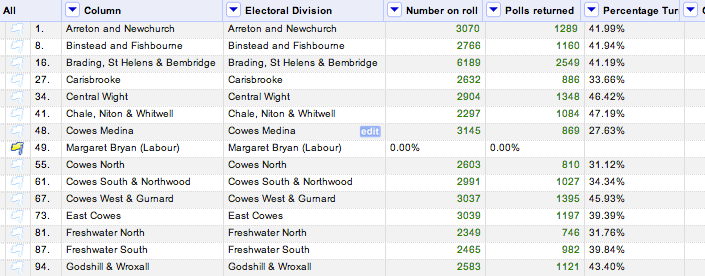

Here’s what the original data for my own local council (the Isle of Wight council, a unitary authority) looked like – a multi-page PDF document collating the Notice of polls for each electoral division (archive copy):

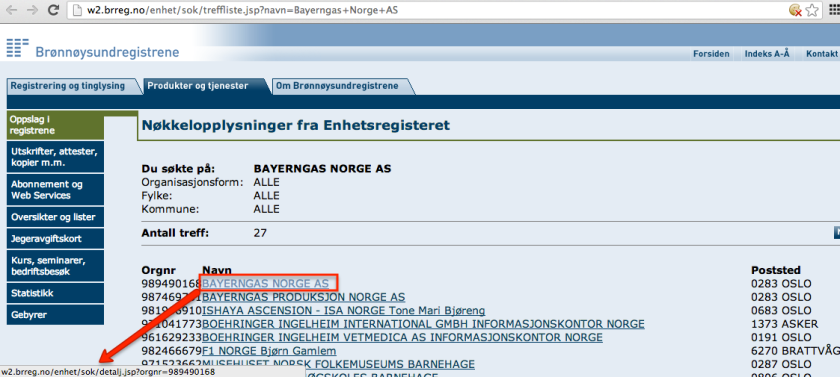

Although it’s a PDF, the document is reasonably nicely structured for scraping (I’ll do a post on this over the next week or two) – you can find a Scraperwiki scraper here. I pull out three sorts of data – information about the polling stations (the table at the bottom of the page), information about the signatories (of which, more in a later post…;-), and information about the candidates, including the electoral division in which they were standing (the “ward” column) and a home address for them, as shown here:

So what might we be able to do with this information? Does the home address take us anywhere interesting? Maybe. If we can easily look up the electoral division the home addresses fall in, we have a handful of news story search opportunities: 1) to what extent are candidates – and election winners – “local”? 2) do any of the parties appear to favour standing in/out of ward candidates? 3) if candidates are standing out of their home ward, why? And if we complement the data with information about the number of votes cast for each candidate, might we be able to find any patterns suggestive of a beneficial or detrimental effect of living within, or outside of, the electoral division a candidate is standing in?

In this post, I’ll describe a way of having a conversation with the data using OpenRefine and Google Fusion Tables as a way of starting to explore some of the stories we may be able to tell with, and around, the data. (Bruce Mcpherson/Excel Liberation blog has also posted an Excel version of the methods described in the post: Mashing up electoral data. Thanks, Bruce:-)

Let’s get the data into OpenRefine so we can start to work it. Scraperwiki provides a CSV output format for each scraper table, so we can get a URL for it that we can then use to pull the data into OpenRefine:

In OpenRefine, we can Create a New Project and then import the data directly:

The data is in comma separated (CSV) format, so let’s specify that:

We can then name and create the project and we’re ready to start…

…but start what? If we want to find out if a candidate lives in ward or out of ward, we either need to know whether their address is in ward or out of ward, or we need to find out which ward their address is in and then see if it is the same as the one they are standing in.

Now it just so happens (:-) that MySociety run a service called MapIt that lets you submit a postcode and it tells you a whole host of things about what administrative areas that postcode is in, including (in this case) the unitary authority electoral division.

And what’s more, MapIt also makes the data available in a format that OpenRefine can read, at a web address (aka a URL) that we can construct from a postcode:

Here’s an example of just such a web address: http://mapit.mysociety.org/postcode/PO36%200JT

Can you see the postcode in there? http://mapit.mysociety.org/postcode/PO36%200JT

The %20 is a character encoding for a space. In this case, we can also use a +.

So – to get information about the electoral division an address lies in, we need to get the postcode, construct a URL to pull down corresponding data from MapIt, and then figure out some way to get the electoral division name out of the data. But one step at a time, eh?!;-)

Hmmm… I wonder if postcode areas necessarily fall within electoral divisions? I can imagine (though it may be incorrect to do so!) a situation where a division boundary falls within a postcode area, so we need to be suspicious about the result, or at least bear in mind that an address falling near a division boundary may be wrongly classified. (I guess if we plot postcodes on a map, we could look to see how close to the boundary line they are, because we already know how to plot boundary lines.)

To grab the postcode, a quick skim of the addresses suggests that they are written in a standard way – the postcode always seems to appear at the end of the string preceded by a comma. We can use this information to extract the postcode, by splitting the address at each comma into an ordered list of chunks, then picking the last item in the list. Because the postcode might be preceded by a space character, it’s often convenient for us to strip() any white space surrounding it.

What we want to do then is to create a new, derived column based on the address:

And we do this by creating a list of comma separated chunks from the address, picking the last one (by counting backwards from the end of the list), and then stripping off any whitespace/space characters that surround it:
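The same split, pick-the-last-chunk and strip logic can be sketched in Python rather than as an OpenRefine expression. This is just an illustration of the idea: the function name and the example address are made up, and only the postcode itself comes from the example used later in this post.

```python
def extract_postcode(address: str) -> str:
    """Return the last comma-separated chunk of an address, minus surrounding whitespace."""
    chunks = address.split(",")   # ordered list of comma-separated chunks
    return chunks[-1].strip()     # last chunk, counting back from the end


# Hypothetical address, for illustration only
example_address = "1 Example Road, Sometown, Isle of Wight, PO36 0JT"
print(extract_postcode(example_address))
```

Note that if an address has no postcode at the end, this will quietly hand back whatever the last chunk happens to be, so the results are worth eyeballing.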

Here’s the result…

Having got the postcode, we can now generate a URL from it and then pull down the data from each URL:

When constructing the web address, we need to remember to encode the postcode by escaping it so as not to break the URL:
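As a rough sketch of what that escaping step does, here is the same URL construction in Python, using the standard library's `urllib.parse.quote`. The function name is my own invention; the base URL is the MapIt address shown above.

```python
from urllib.parse import quote

MAPIT_BASE = "http://mapit.mysociety.org/postcode/"


def mapit_url(postcode: str) -> str:
    """Build a MapIt lookup URL, percent-encoding the space in the postcode."""
    return MAPIT_BASE + quote(postcode.strip())


print(mapit_url("PO36 0JT"))
```

`quote` turns the space into `%20`, which gives us the example web address we saw earlier.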

The throttle value slows down the rate at which OpenRefine loads in data from the URLs. If we set it to 500 milliseconds, it will load one page every half a second.

When it’s loaded in all the data, we get a new column, filled with data from the MapIt service…

We now need to parse this data (which is in a JSON format) to pull out the electoral division. There’s a bit of jiggery pokery required to do this, and I couldn’t work it out myself at first, but Stack Overflow came to the rescue:

We need to tweak that expression slightly by first grabbing the areas data from the full set of MapIt data. Here’s the expression I used:

filter(('[' + (value.parseJson()['areas'].replace( /"[0-9]+":/,""))[1,-1] + ']' ).parseJson(), v, v['type']=='UTE' )[0]['name']

to create a new column containing the electoral division:
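If the expression above looks a bit opaque, the idea behind it is simple enough: look through the items in the `areas` part of the MapIt data and keep the name of the one whose `type` is `UTE`. Here is a minimal Python sketch of that idea. The sample record is made up and much smaller than a real MapIt response; it only assumes the `areas`, `type` and `name` fields that the expression itself relies on.

```python
import json


def electoral_division(mapit_json: str, area_type: str = "UTE"):
    """Return the name of the first area of the given type, or None if there isn't one."""
    data = json.loads(mapit_json)
    # 'areas' maps numeric area ids to records that carry a 'type' and a 'name'
    for area in data.get("areas", {}).values():
        if area.get("type") == area_type:
            return area.get("name")
    return None


# Hypothetical, cut-down response for illustration only
sample = json.dumps({
    "areas": {
        "1001": {"type": "UTA", "name": "Example Council"},
        "1002": {"type": "UTE", "name": "Example Division"},
    }
})
print(electoral_division(sample))
```

Returning `None` when no matching area is found makes it easier to spot rows where the lookup failed, rather than having the whole thing fall over.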

Now we can create another column, this time based on the new Electoral Division column, that compares the value against the corresponding original “ward” column value (i.e. the electoral division the candidate was standing in) and prints a message saying whether they were standing in ward or out:
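The comparison itself is a simple test for equality. A hedged Python sketch of it might look like the following; the function name and the "in"/"out"/"unknown" labels are my own choices, and ignoring case and surrounding spaces is an assumption about how tidy the two columns are.

```python
def in_or_out(home_division, standing_ward):
    """Say whether a candidate lives in the division they are standing in."""
    if not home_division:
        return "unknown"   # no division found for the home postcode
    # Assumption: ignore case and stray whitespace when comparing names
    same = home_division.strip().lower() == standing_ward.strip().lower()
    return "in" if same else "out"


print(in_or_out("Example Division", "Example Division"))
print(in_or_out("Another Division", "Example Division"))
```

If the names are spelled differently in the two sources (an "and" versus an "&", say), a straight comparison like this will report "out" when it shouldn't, so it's worth checking a few by eye.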

If we collapse down the spare columns, we get a clearer picture:

Like this:

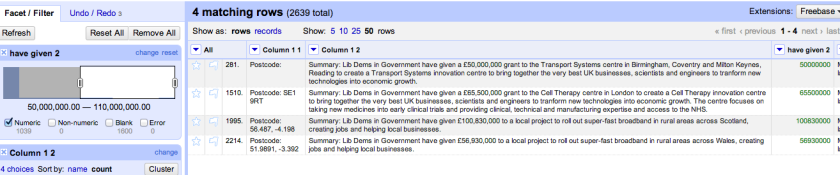

If we generate a text facet on the In/Out column, and increase the number of rows displayed, we can filter the results to show just the candidates who stood in their local electoral division (or conversely, those who stood outside it):

We can also start to get investigative, and ask some more questions of the data. For example, we could apply a text facet on the party/desc column to let us filter the results even more…

Hmmm… were most of the Labour Party candidates standing outside their home division (and hence unable to vote for themselves?!)

There aren’t too many parties represented across the Island elections (a text facet on the desc/party description column should reveal them all), so it wouldn’t be too hard to treat the data as a source, get paper and pen in hand, and write down the in/out counts for each party describing the extent to which they fielded candidates who lived in the electoral divisions they were standing in (and as such, could vote for themselves!) versus those who lived “outside”. This data could reasonably be displayed using a staggered bar chart (the data collection and plotting are left as an exercise for the reader [See Bruce Mcpherson’s Mashing up electoral data post for a stacked bar chart view.];-) Another possible line of questioning is how the different electoral divisions fare in terms of in-vs-out resident candidates. If we pull in affluence/poverty data, might it tell us anything about the likelihood of candidates living in the area, or even tell us something about the likely socio-economic standing of the candidates?
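If pen and paper doesn't appeal, the in/out tally per party is easy enough to sketch in Python. The rows below are invented placeholders rather than real results, and the function name is my own.

```python
from collections import Counter


def in_out_counts(rows):
    """Count (party, in/out) pairs from an iterable of (party, status) rows."""
    counts = Counter()
    for party, status in rows:
        counts[(party, status)] += 1
    return counts


# Hypothetical rows, for illustration only
rows = [
    ("Party A", "in"),
    ("Party A", "out"),
    ("Party A", "out"),
    ("Party B", "in"),
]
counts = in_out_counts(rows)
for (party, status), n in sorted(counts.items()):
    print(party, status, n)
```

Counts in this shape (one number per party per in/out status) are what a bar chart per party would need.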

One more thing we could try to do is to geocode the postcode of the address of each candidate rather more exactly. A blog post by Ordnance Survey blogger John Goodwin (@gothwin) shows how we might do this (note: copying the code from John’s post won’t necessarily work; WordPress has a tendency to replace single quotes with all manner of exotic punctuation marks that f**k things up when you copy and paste them into forms for use in other contexts). When we “Add column by fetching URLs”, we should use something along the lines of the following:

'http://beta.data.ordnancesurvey.co.uk/datasets/code-point-open/apis/search?output=json&query=' + escape(value,'url')

The data, as imported from the Ordnance Survey, looks something like this:

As is the way of national services, the Ordnance Survey returns a data format that is all well and good but isn’t the one that mortals use. Many of my geo-recipes rely on latitude and longitude co-ordinates, but the call to the Ordnance Survey API returns Eastings and Northings.

Fortunately, Paul Bradshaw had come across this problem before (How to: Convert Easting/Northing into Lat/Long for an Interactive Map) and bludgeoned(?!;-) Stuart Harrison/@pezholio, ex- of Lichfield Council, now of the Open Data Institute, to produce a pop-up service that returns lat/long co-ordinates in exchange for a Northing/Easting pair.

The service relies on URLs of the form http://www.uk-postcodes.com/eastingnorthing.php?easting=EASTING&northing=NORTHING, which we can construct from data returned from the Ordnance Survey API:
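By way of illustration, here is how a URL of that form might be put together in Python. The function name and the easting/northing values are made up, and the sketch only builds the web address; it doesn't assume anything about what the service sends back.

```python
from urllib.parse import urlencode

LOOKUP_BASE = "http://www.uk-postcodes.com/eastingnorthing.php"


def latlong_lookup_url(easting, northing) -> str:
    """Build the lookup URL for an easting/northing pair."""
    return LOOKUP_BASE + "?" + urlencode({"easting": easting, "northing": northing})


# Hypothetical co-ordinates, for illustration only
print(latlong_lookup_url(459000, 85000))
```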

Here’s what the returned lat/long data looks like:

We can then create a new column derived from this JSON data by parsing it as follows

A similar trick can be used to generate a column containing just the longitude data.

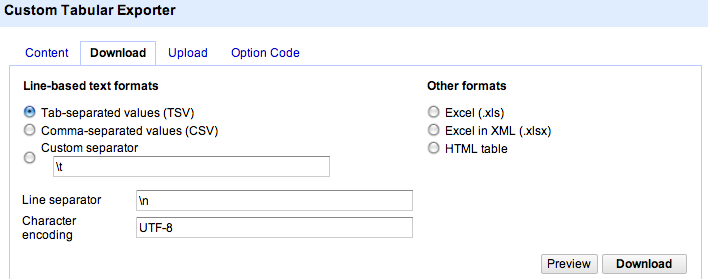

We can then export a view over the data to a CSV file, or direct to Google Fusion Tables.

With the data in Google Fusion Tables, we can let Fusion Tables know that the Postcode lat and Postcode long columns define a location:

Specifically, we pick either the lat or the long column and use it to cast a two column latitude and longitude location type:

We can inspect the location data using a more convenient “natural” view over it…

By applying a filter, we can look to see where the candidates for a particular ward have declared their home address to be:

(Note – it would be more useful to plot these markers over a boundary line defined region corresponding to the area covered by the corresponding electoral ward. I don’t think Fusion Tables lets you do this directly (or if it does, I don’t know how to do it..!). This workaround – FusionTablesLayer Wizard – on merging outputs from Fusion Tables as separate layers on a Google Map is the closest I’ve found following a not very thorough search;-)

We can go back to the tabular view in Fusion Tables to run a filter to see who the candidates were in a particular electoral division, or we can go back to OpenRefine and run a filter (or a facet) on the ward column to see who the candidates were:

Filtering on some of the other wards using local knowledge (i.e. using the filter to check/corroborate things I knew), I spotted a couple of missing markers. Going back to the OpenRefine view of the data, I ran a faceted view on the postcode to see if there were any “non-postcodes” there that would in turn break the Ordnance Survey postcode geocoding/lookup:
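A quick automated version of this check might test each extracted value against a rough postcode pattern. The pattern in the Python sketch below is a loose approximation of the usual UK postcode shape rather than an official validation rule, and the function name is my own.

```python
import re

# Loose approximation: 1-2 letters, a digit, an optional digit or letter,
# optional space, then a digit and two letters. Not an official rule.
POSTCODE_PATTERN = re.compile(r"^[A-Z]{1,2}[0-9][0-9A-Z]?\s*[0-9][A-Z]{2}$")


def looks_like_postcode(value: str) -> bool:
    """Return True if the value has roughly the shape of a UK postcode."""
    return bool(POSTCODE_PATTERN.match(value.strip().upper()))


for value in ["PO36 0JT", "Isle of Wight"]:
    print(value, looks_like_postcode(value))
```

Anything that fails the test is a candidate for a closer look by hand.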

Ah – oops… It seems we have a “data quality” issue, albeit a minor one…

So, what do we learn from all this? One takeaway for me is that data is a source we can ask questions of. If we have a story or angle in mind, we can tune our questions to tease out corroborating facts (possibly! caveat emptor applies!) that might confirm, help develop, or even cause us to rethink, the story we are working towards telling based on the support the data gives us.