Way back when, when I first started blogging, I tried to push the idea of “live documents” that supported transclusion of content from elsewhere (e.g. Keeping Courses Current with Live Links; there was also a demo, but I think it’s rotted…?). A couple of days ago, Owen Stephens (re)introduced me to the notion of literate programming, “a methodology that combines a programming language with a documentation language”. The context was active reading of reactive documents, in which a reader interacts with a document containing human-readable paragraphs that describe some sort of mathematical or logical model, which is embedded in the text as interactive, parameterised elements. (I can’t give a demo in this WordPress.com hosted blog because what I am allowed to do is really locked down… so to see what I’m talking about, check out the Explorable Explanations example document… I can wait…)

Done that? Up to speed now?

I’d also come across the reactive document model recently through seeing a link (from somewhere… I can’t remember where now :-( ) to the JavaScript library that was used to implement Explorable Explanations: Tangle. (Having a play with it is very much on my to do list…)

My immediate impression was that it reminded me of the interactive, browser-based programming style (e.g. Online Apps for Live Code Tutorials/Demos), in which learners can read and run, edit and run, and write and run code examples in the browser (or, more generally, in the context of an “electronic study guide” (eSG)). It also brought to mind similarities with dexy.it and Sweave, a couple of (literate programming;-) frameworks that allow you to include programme code within a document and then execute it in order to produce an output that also appears in the document. (I remember that one of the joys of course writing for an eSG is that you often have to hand over the text (including code and output in situ), a text file containing the code (for testing), and a text file containing the output. If (when) an error is found, version control across the various files can become really problematic. Far easier if the document were to include code fragments that are then executed and used to produce the actual output that is in turn piped directly into the final document.) Wolfram’s Computable Document Format also comes to mind, as a document format that allows a reader to express executable mathematical statements, whether formally specified or, increasingly, using natural language.
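
To make the “single source” idea a bit more concrete, here’s a minimal sketch in Python of the sort of weaving step that tools like Sweave perform: code chunks embedded in the text are executed, and whatever they print is spliced back into the document alongside the code. The `<<>>=` and `@` chunk delimiters borrow Sweave’s noweb-style syntax, but the `weave()` function is a hypothetical toy of my own, not how Sweave or dexy are actually implemented.

```python
import contextlib
import io
import re

# Sweave-style (noweb) chunk syntax: a line starting "<<...>>=" opens a code chunk
# and a line containing only "@" closes it. Chunk options are ignored in this sketch.
CHUNK = re.compile(r"^<<[^\n]*>>=\n(.*?)^@$", re.DOTALL | re.MULTILINE)


def weave(source):
    """Execute each code chunk and splice the code plus its printed output into the text."""
    namespace = {}  # shared by all chunks, so later chunks can reuse earlier results

    def run_chunk(match):
        code = match.group(1)
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)  # only ever do this with documents you trust!
        return code + "## Output:\n" + buffer.getvalue()

    return CHUNK.sub(run_chunk, source)


doc = """The average mark is computed from the raw data, not typed in by hand:
<<>>=
marks = [50, 60, 70]
print(sum(marks) / len(marks))
@
Change the marks, re-weave, and the output updates with them.
"""
print(weave(doc))
```

Because the code, the text and the output all come from the one file, there’s no separate code file or output file to drift out of sync when an error gets fixed.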

So the document space I’m imagining here is one in which the document contains one or more components that are generated in response to some sort of request from an operational part of the document, or a part of the document that encodes some sort of performative action[?????], such as a search term that is used to trigger a search whose results are then included within the page, a piece of programme code that can be executed in order to generate an output, or a parameter for a model that can be run with the specified parameter value in order to produce an output that is rendered live within the document.

For example, this might include a ‘live’ document that transcludes content from an external source.

Or a literate programme that combines:

– some explanatory text;

– fragments of, or complete, programmes;

– the output of the programme.

Or a reactive document which contains:

– some explanatory text;

– parameterised programme code, or a parameterised mathematical or logical model; the code/model should also be executable, using parameter values specified by the reader;

– the output from executing the code or model.
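
As a rough sketch of the reactive model (this is not Tangle’s actual API, which is JavaScript), here’s a toy Python version: the prose is a template, the reader-adjustable parameters feed a model, and every time a parameter changes the model is re-run and the text re-rendered. The `ReactiveDocument` class, the `calorie_model()` function and its numbers (50 calories per cookie, a 2000 calorie daily allowance) are all made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class ReactiveDocument:
    """Hypothetical reactive document: prose template + reader-set parameters + a model."""
    template: str  # explanatory text with {placeholders} for parameters and model outputs
    model: Callable[[Dict[str, float]], Dict[str, float]]  # derives outputs from parameters
    params: Dict[str, float] = field(default_factory=dict)

    def render(self) -> str:
        # Re-run the model on the current parameters, then fill in the text.
        # (Parameter names and output names must not clash.)
        outputs = self.model(self.params)
        return self.template.format(**self.params, **outputs)

    def set_param(self, name: str, value: float) -> str:
        # Stands in for the reader dragging a slider or editing a number in the text
        self.params[name] = value
        return self.render()


def calorie_model(p: Dict[str, float]) -> Dict[str, float]:
    # Made-up model: 50 calories per cookie, against a 2000 calorie daily allowance
    calories = p["cookies"] * 50
    return {"calories": calories, "percent": round(100 * calories / 2000)}


doc = ReactiveDocument(
    template="If you eat {cookies:g} cookies, you consume {calories:g} calories, "
    "{percent}% of a 2000 calorie daily allowance.",
    model=calorie_model,
    params={"cookies": 4},
)
print(doc.render())                   # 4 cookies -> 200 calories, 10%
print(doc.set_param("cookies", 10))   # 10 cookies -> 500 calories, 25%
```

The point of keeping the model separate from the template is that the same explanatory text can sit on top of a different (or corrected) model without being rewritten.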

(I guess a live document might be viewed as reactive in certain cases, for example, when a user specifies a search term or query that determines what content is pulled live into a document from an external source.)
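
A live document where the reader’s query drives the transclusion might look something like the following sketch. The `{{search: …}}` directive syntax and the `render_live()` function are hypothetical, and the `search` callable is a stand-in: in practice it would wrap a call to some external search service, whereas here a local lookup table (`fake_search()`, also made up) keeps the example self-contained.

```python
import re
from typing import Callable, List

# Hypothetical directive syntax: {{search: some query}} marks a live, transcluded region
DIRECTIVE = re.compile(r"\{\{search:\s*(.*?)\s*\}\}")


def render_live(document: str, search: Callable[[str], List[str]]) -> str:
    """Replace each search directive with whatever the search source returns right now."""
    def transclude(match):
        results = search(match.group(1))
        return "\n".join(f"- {item}" for item in results) or "(no results)"

    return DIRECTIVE.sub(transclude, document)


# Stand-in for an external source; a real live document would query a remote service
def fake_search(query: str) -> List[str]:
    catalogue = {"literate programming": ["Sweave", "dexy"]}
    return catalogue.get(query, [])


# The reader's query lives in the document itself, so editing it changes what is pulled in
page = "Related tools:\n{{search: literate programming}}\n"
print(render_live(page, fake_search))
```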

There is something almost cell-like going on here, in that part of the document contains the instructions that some document machinery can process in order to produce other parts of the document…

One obvious use case for living documents is in educational materials. For a long time now (even before the time of educational CD-ROMs;-), eLearning materials have included interactive components. But these have often been external components that have been slotted into the educational text, rather than being generated from the execution of a specified part of the text. For example, many OU course materials include interactive self-assessment questions, or Flash-based interactive exercises (hmm… I wonder when these are going to be rebranded as edu-apps and made available, for a fee or via an open licence, in an OU edu-app market;-) [Note: the OU used to be a pretty significant educational software house in terms of output, with large numbers of highly skilled educational software developers who knew how to turn out software that worked in educational terms… but that was before the VLE came along…;-)]

Another use case is in the area of data journalism. A criticism of many interactive visualisations produced to support news stories is that whilst they’re all very nice and shiny, they don’t actually work that well to communicate anything of substance at all (for example, see my comments on Michael Blastland’s talk at the OU Stats conference). Maybe a few well-crafted reactive documents might start to redress this balance, and engage at least part of the audience in a contextualised consideration of data- (or model-) based stories…?

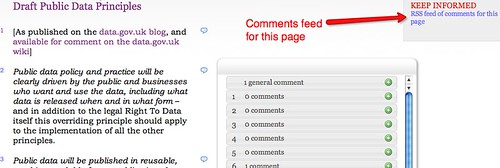

A third area I’d like to spend some time mulling over (maybe even in the context of Public Platforms…?) is policy development and public consultation, scoping out what may be possible and plausible if consultation documents were to propose particular models and then allow the engaged reader to explore the various parameter regimes associated with those models?

Hmmm…. maybe I need to start working on my resolutions for next year…?!

PS just in passing, as well as treating documents as living things, it can also be instructive to think of them as databases. This is a trivial mapping if the document has a regular tabular structure, such as a spreadsheet sheet, or is otherwise formally structured (as, for example, in the case of an XML document, which typically describes some sort of hierarchical (document as data) structure), or even if it contains conventions in either style or content (for example, section headings being phrased in the form “Section NN: blah blah blah”, where “Section NN: ” is a convention that can be used to identify the semantics of the text “blah blah blah”, in this case as the text representing the header of section NN).
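
By way of a quick illustration of the document-as-database idea, the following sketch uses the “Section NN: ” convention to pull headings out of a plain-text document and load them into an in-memory SQLite table, which can then be queried with plain old SQL. The regular expression, the `document_to_db()` function and the `sections` table layout are my own assumptions about how such headings might be written and stored.

```python
import re
import sqlite3

# Convention: a line "Section NN: heading text" marks "heading text" as the title of section NN
HEADING = re.compile(r"^Section (\d+): (.+)$", re.MULTILINE)


def document_to_db(text: str) -> sqlite3.Connection:
    """Load the section headings of a plain-text document into an in-memory SQLite table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sections (num INTEGER, heading TEXT)")
    rows = [(int(num), heading.strip()) for num, heading in HEADING.findall(text)]
    conn.executemany("INSERT INTO sections VALUES (?, ?)", rows)
    return conn


doc = """Section 1: Live documents
Some text about transclusion.
Section 2: Literate programming
Some text about Sweave and dexy.
Section 3: Reactive documents
Some text about Tangle.
"""
db = document_to_db(doc)
print(db.execute("SELECT num, heading FROM sections WHERE num >= 2 ORDER BY num").fetchall())
# [(2, 'Literate programming'), (3, 'Reactive documents')]
```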