For me, one of the defining attributes of openness relates to accessibility of the machine kind: if I can’t write a script to handle the repetitive stuff for me, or can’t automate the embedding of image and/or video resources, then whatever the content is, it’s not open enough in a practical sense for me to do what I want with it.

So here’s an, erm, how can I put this politely, little niggle I have with OpenLearn XML. (For those of you not keeping up: one of the many OpenLearn sites is the OU’s open course materials site, and the materials published there as HTML course unit pages are also available as structured XML documents. When I say “structured”, I mean that certain elements of the materials are marked up in a semantically meaningful way; lots of elements aren’t, but we have to start somewhere ;-))

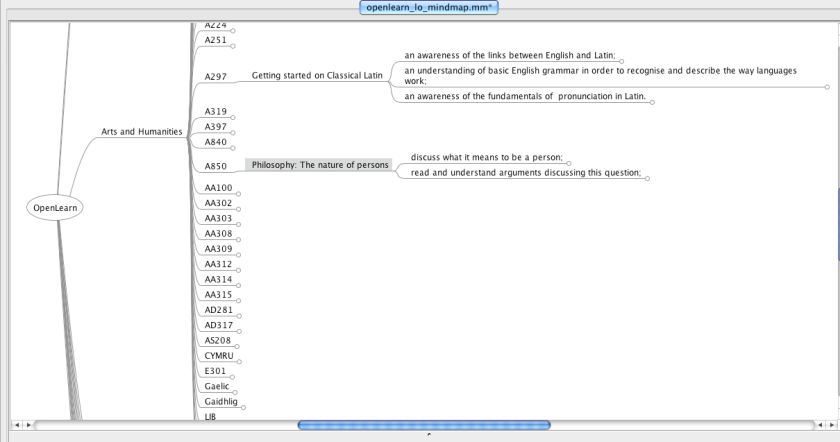

The context is this: following on from my presentation on Making More of Structured Course Materials at the eSTeEM conference last week, I left a chat with Jonathan Fine with the intention of seeing what sorts of secondary product I could easily generate from the OpenLearn content. I’m in the middle of building a scraper and structured content extractor at the moment, grabbing things like learning outcomes, glossary items, references and images, but I almost immediately hit a couple of problems, first with actually locating the OU XML docs, and secondly locating the images…

Getting hold of a machine readable list of OpenLearn units is easy enough via the OpenLearn OPML feed (much easier to work with than the “all units” HTML index page). Units are organised by topic and are listed using the following format:

<outline type="rss" text="Unit content for Water use and the water cycle" htmlUrl="http://openlearn.open.ac.uk/course/view.php?name=S278_12" xmlUrl="http://openlearn.open.ac.uk/rss/file.php/stdfeed/4307/S278_12_rss.xml"/>

URLs of the form http://openlearn.open.ac.uk/course/view.php?name=S278_12 link to a “homepage” for each unit, which then links to the first page of actual content, which is also available in XML form. The content page URLs have the form http://openlearn.open.ac.uk/mod/oucontent/view.php?id=398820&direct=1, where the ID maps one-to-one onto the course name identifier. The XML version of the page can then be accessed by changing direct=1 in the URL to content=1. Only, we don’t know the mapping from course unit name to page ID. The easiest way I’ve found of getting it is to load the RSS feed for each unit and grab the first link URL, which points to the first HTML content page of the unit.
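As a minimal sketch of that URL rewrite (Python 3, standard library only; the function name `to_xml_url` is just an illustrative choice, and it assumes the content page URL carries the `direct=1` parameter as in the example above):

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode


def to_xml_url(content_url):
    """Turn an OpenLearn content page URL into its XML equivalent
    by replacing direct=1 with content=1 in the query string."""
    parts = urlsplit(content_url)
    # Keep every query parameter except 'direct', then append content=1
    params = [(k, v) for k, v in parse_qsl(parts.query) if k != 'direct']
    params.append(('content', '1'))
    return urlunsplit(parts._replace(query=urlencode(params)))


print(to_xml_url('http://openlearn.open.ac.uk/mod/oucontent/view.php?id=398820&direct=1'))
```

Working on the parsed query string rather than doing a plain string replace means the rewrite still behaves if the parameters turn up in a different order.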

I’ve popped a scraper up on Scraperwiki to build the lookup for XML URLs for OpenLearn units – OpenLearn XML Processor:

    import scraperwiki
    from lxml import etree

    #===
    #via http://stackoverflow.com/questions/5757201/help-or-advice-me-get-started-with-lxml/5899005#5899005
    def flatten(el):
        result = [ (el.text or "") ]
        for sel in el:
            result.append(flatten(sel))
            result.append(sel.tail or "")
        return "".join(result)
    #===

    def getcontenturl(srcUrl):
        rss= etree.parse(srcUrl)
        rssroot=rss.getroot()
        try:
            contenturl= flatten(rssroot.find('./channel/item/link'))
        except:
            contenturl=''
        return contenturl

    def getUnitLocations():
        #The OPML file lists all OpenLearn units by topic area
        srcUrl='http://openlearn.open.ac.uk/rss/file.php/stdfeed/1/full_opml.xml'
        tree = etree.parse(srcUrl)
        root = tree.getroot()
        topics=root.findall('.//body/outline')
        #Handle each topic area separately?
        for topic in topics:
            tt = topic.get('text')
            print tt
            for item in topic.findall('./outline'):
                it=item.get('text')
                if it.startswith('Unit content for'):
                    it=it.replace('Unit content for','')
                    url=item.get('htmlUrl')
                    rssurl=item.get('xmlUrl')
                    ccu=url.split('=')[1]
                    cctmp=ccu.split('_')
                    cc=cctmp[0]
                    if len(cctmp)>1: ccpart=cctmp[1]
                    else: ccpart=1
                    slug=rssurl.replace('http://openlearn.open.ac.uk/rss/file.php/stdfeed/','')
                    slug=slug.split('/')[0]
                    contenturl=getcontenturl(rssurl)
                    print tt,it,slug,ccu,cc,ccpart,url,contenturl
                    scraperwiki.sqlite.save(unique_keys=['ccu'], table_name='unitsHome', data={'ccu':ccu, 'uname':it,'topic':tt,'slug':slug,'cc':cc,'ccpart':ccpart,'url':url,'rssurl':rssurl,'ccurl':contenturl})

    getUnitLocations()

The next step in the plan (because I usually do have a plan; it’s hard to play effectively without some sort of direction in mind…), as far as images go, was to grab the figure elements out of the XML documents and generate an image gallery that lets you search through OpenLearn images by title/caption and/or description, and preview them. Getting the caption and description from the XML is easy enough, but getting the image URLs is not…

Here’s an example of a figure element from an OpenLearn XML document:

    <Figure id="fig001">
      <Image src="\\DCTM_FSS\content\Teaching and curriculum\Modules\Shared Resources\OpenLearn\S278_5\1.0\s278_5_f001hi.jpg" height="" webthumbnail="false" x_imagesrc="s278_5_f001hi.jpg" x_imagewidth="478" x_imageheight="522"/>
      <Caption>Figure 1 The geothermal gradient beneath a continent, showing how temperature increases more rapidly with depth in the lithosphere than it does in the deep mantle.</Caption>
      <Alternative>Figure 1</Alternative>
      <Description>Figure 1</Description>
    </Figure>
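To show how the easy half of that looks, here is a rough sketch of pulling the id, filename, caption and description out of figure elements. It uses the standard library’s `xml.etree.ElementTree` rather than the lxml used in the scraper above, the sample is abridged from the figure shown here (the `src` attribute is left out), and the helper names `text_of` and `extract_figures` are my own:

```python
import xml.etree.ElementTree as ET

# Abridged from the example figure (the src attribute is omitted)
SAMPLE = '''<Figure id="fig001">
  <Image height="" webthumbnail="false" x_imagesrc="s278_5_f001hi.jpg" x_imagewidth="478" x_imageheight="522"/>
  <Caption>Figure 1 The geothermal gradient beneath a continent, showing how temperature increases more rapidly with depth in the lithosphere than it does in the deep mantle.</Caption>
  <Alternative>Figure 1</Alternative>
  <Description>Figure 1</Description>
</Figure>'''


def text_of(parent, tag):
    """Return all the text inside the first child called `tag`,
    including text inside any inline markup, or '' if it is absent."""
    el = parent.find(tag)
    return ''.join(el.itertext()).strip() if el is not None else ''


def extract_figures(root):
    """Collect one record per Figure element found at or below root."""
    figures = []
    for fig in root.iter('Figure'):
        image = fig.find('Image')
        figures.append({
            'id': fig.get('id'),
            # x_imagesrc holds the bare filename, with no usable path
            'filename': image.get('x_imagesrc') if image is not None else None,
            'caption': text_of(fig, 'Caption'),
            'description': text_of(fig, 'Description'),
        })
    return figures


for record in extract_figures(ET.fromstring(SAMPLE)):
    print(record['id'], record['filename'], record['caption'][:40])
```

Note that all this gets us is a filename, not a URL.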

Looking at the HTML page for the corresponding unit on OpenLearn, we see it points to the image resource file at http://openlearn.open.ac.uk/file.php/4178/!via/oucontent/course/476/s278_5_f001hi.jpg.

So how can we generate that image URL from the resource link in the XML document? The filename is the same, but how can we generate what are presumably contextually relevant path elements: http://openlearn.open.ac.uk/file.php/4178/!via/oucontent/course/476/

If we look at the OpenLearn OPML file that lists all current OpenLearn units, we can find the first identifier in the path to the RSS file:

<outline type="rss" text="Unit content for Energy resources: Geothermal energy" htmlUrl="http://openlearn.open.ac.uk/course/view.php?name=S278_5" xmlUrl="http://openlearn.open.ac.uk/rss/file.php/stdfeed/4178/S278_5_rss.xml"/>

But I can’t seem to find a crib for the second identifier – 476 – anywhere. Which means I can’t mechanise the creation of links to actual OpenLearn image assets from the XML source. Also note that there are no credits, acknowledgements or license conditions associated with the image contained within the figure description. Which also makes it hard to reuse the image in a legal, rights-recognising sense.
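To make the gap concrete, here is a sketch of what the URL builder would look like if both identifiers were available. The URL pattern is inferred from the single example above, and `build_image_url` and its parameter names are hypothetical; in particular, I have no source for `course_id`, which is exactly the problem:

```python
def build_image_url(slug, course_id, filename):
    """Assemble an image URL following the pattern seen in one example.

    slug      -- first path identifier, available from the OPML xmlUrl (e.g. 4178)
    course_id -- second path identifier (e.g. 476); no known way to look this up
    filename  -- the x_imagesrc value from the Image element
    """
    return 'http://openlearn.open.ac.uk/file.php/{}/!via/oucontent/course/{}/{}'.format(
        slug, course_id, filename)


# Reproduces the example URL, but only because 476 was read off the HTML page by hand
print(build_image_url('4178', '476', 's278_5_f001hi.jpg'))
```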

[Doh – I can surely just look at the URL for an image in an OpenLearn unit RSS feed and pick the path up from there, can’t I? Only I can’t, because the image links in the RSS feeds are: a) relative links, without path information, and b) broken as a result…]

Reusing images on the basis of the OpenLearn XML “sourcecode” document is therefore: NOT OBVIOUSLY POSSIBLE.

What this suggests to me is that if you release “source code” documents, they may need some processing first: where assets are encoded in the source as “private” or non-resolvable identifiers, an asset resolution step should generate publicly resolvable locators for them.

Where necessary, acknowledgements/credits are provided in the backmatter using elements of the form:

<Paragraph>Figure 7 Willes-Richards, J., et al. (1990) ; HDR Resource/Economics’ in Baria, R. (ed.) <i>Hot Dry Rock Geothermal Energy</i>, Copyright CSM Associates Limited</Paragraph>

Whilst OU-XML does support the ability to make a meaningful link to a resource within the XML document, using an element of the form:

<CrossRef idref="fig007">Figure 7</CrossRef>

(which presumably uses the Alternative label as the cross-referenced identifier, although not the figure element id (e.g. fig007), which is presumably unique within any particular XML document), this identifier is not used to link the informally stated figure credit back to the uniquely identified figure element.
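In the absence of a proper link, about the best I can see is a guess along the following lines. This is a sketch only: it assumes that a credit paragraph starts with its “Figure N” label and that figure ids follow the zero-padded `figNNN` pattern seen in the two examples above (fig001, fig007), neither of which I know to be a rule. The name `credit_to_figure_id` is mine:

```python
import re

# A credit paragraph is assumed to open with a label such as "Figure 7"
FIGURE_LABEL = re.compile(r'^\s*Figure\s+(\d+)\b')


def credit_to_figure_id(credit_text):
    """Guess the figure element id that a credit paragraph refers to.

    Returns None when the text does not start with a "Figure N" label.
    """
    match = FIGURE_LABEL.match(credit_text)
    if match is None:
        return None
    # Assumed id convention: "fig" plus the figure number padded to three digits
    return 'fig{:03d}'.format(int(match.group(1)))


print(credit_to_figure_id('Figure 7 Willes-Richards, J., et al. (1990)'))
```

A guess like this breaks as soon as a unit numbers its figures differently (Figure 1.2, say), which is rather the point: the link ought to be in the markup.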

If the same image asset is used in several course units, there is presumably no way of telling from the element data (or even, necessarily, the credit data?) whether the images are in fact one and the same. That is, we can’t audit the OpenLearn materials in a mechanised, text-based way to see whether or not particular images are reused across two or more OpenLearn units.

Just in passing, it’s maybe also worth noting that in the above case at least, a description for the image is missing. In actual OU course materials, the description element is used to capture a textual description of the image that explicates the image in the context of the surrounding text. This is a partial fulfilment of accessibility requirements surrounding images and, even if not best practice, is at least effective practice.

Where else might content need liberating within OpenLearn content? At the end of the course unit XML documents, in the “backmatter” element, there is often a list of references. References have the form:

<Reference>Sheldon, P. (2005) Earth’s Physical Resources: An Introduction (Book 1 of S278 Earth’s Physical Resources: Origin, Use and Environmental Impact), The Open University, Milton Keynes</Reference>

Hmmm… no structure there… so how easy would it be to reliably generate a link to an authoritative record for that item? (Note that other records occasionally use presentational markup such as italics (or emphasis) tags to style certain parts of some references, confusing presentation with semantics…)
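To give a feel for how fragile the alternative is, here is a heuristic sketch that tries to split a reference string into authors, year and “everything else”. It assumes the “Author(s) (Year) Rest” layout of the example above, which I have no reason to believe every reference follows; the pattern and the name `split_reference` are illustrative only:

```python
import re

# Assumed layout: authors, then a four digit year in brackets, then the rest
REF_PATTERN = re.compile(r'^(?P<authors>.+?)\s*\((?P<year>\d{4})\)\s*(?P<rest>.*)$')


def split_reference(ref_text):
    """Split a reference string into authors, year and remainder.

    Returns a dict with those three keys, or None if the string
    does not fit the assumed layout.
    """
    match = REF_PATTERN.match(ref_text.strip())
    return match.groupdict() if match else None


ref = ('Sheldon, P. (2005) Earth’s Physical Resources: An Introduction '
       '(Book 1 of S278 Earth’s Physical Resources: Origin, Use and '
       'Environmental Impact), The Open University, Milton Keynes')
print(split_reference(ref))
```

Even when this matches, the title, series and publisher are still one undifferentiated string, so generating a reliable link to an authoritative record would need rather more than a regular expression.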

Finally, just a quick note on why I’m blogging this publicly rather than raising it, erm, quietly within the OU. My reasoning is similar to the reasoning we use when we tell students to not be afraid of asking questions, because it’s likely that others will also have the same question… I’m asking a question about the structure of an open educational resource, because I don’t quite understand it; by asking the question in public, it may be the case that others can use the same questioning strategy to review the way they present their materials, so when I find those, I don’t have to ask similar sorts of question again;-)

PS sort of related to this, see TechDis’ Terry McAndrew’s Accessible courses need an accessibility-friendly schema standard.

PPS see also another take on ways of trying to reduce cognitive waste – Joss Winn’s latest bid in progress, which will examine how the OAuth 2.0 specification can be integrated into a single sign on environment alongside Microsoft’s Unified Access Gateway. If that’s an issue or matter of interest in your institution, why not fork the bid and work it up yourself, or maybe even fork it and contribute elements back? ;-) (Hmm, if several institutions submitted what was essentially the same bid, how would the markers cope during the marking process?! ;-)